Morning Glory at Noon — Ch.8: Bits of Morning Glory (Part 2)

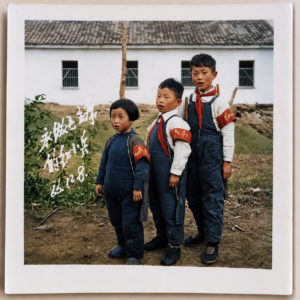

"Never Forget Class Struggle"

I remember it was around 1970, when I was in fourth or fifth grade. Our teacher led us to visit a "Class Struggle Exhibition Hall" to give students a living education. The exhibition hall had explanatory panels, diagrams, and physical objects, all designed to make us feel that class struggle was right beside us — something that needed to be talked about every year, every month, every day.

The first thing we saw was a landlord's "heaven-changing ledger." It was an old land deed that had been dug out from a landlord's cellar. Keeping the deed, naturally, meant he was waiting for the day heaven would change so he could settle accounts with the poor and lower-middle peasants. The guide's script said this old landlord, who normally nodded and bowed before everyone, was in fact old and crafty, his crimes deserving of death ten thousand times over.

There was also the diary of a so-called "rightist who slipped through the net." The commentary said this outwardly respectable teacher had a dark psyche. The several thick volumes of diaries seized from his home were filled with the cloying, decadent sentiments of the bourgeoisie — the diary recorded the author's experiences of romantic love. Even more despicable, it contained poems longing for the Kuomintang reactionaries. The item on display was a poem titled "Yearning for the Sea." What I saw was a lyrical prose poem in elegant handwriting. The parts I vaguely remember went something like: "Oh sea, my homeland, my destination, my longing, my hope!" The entire piece revolved around the theme of the sea. The guide's script asked: why would this rightist who slipped through the net so nauseatingly extol the sea? Obviously, he was yearning for the Kuomintang bandits across the sea in Taiwan, hoping they would launch a counterattack on the mainland. At the time, none of us doubted that the sea in "Yearning for the Sea" symbolized the unnameable Kuomintang. He was one of the "Stinking Ninth Category" of intellectuals, with bourgeois sentiments — surely he was dissatisfied with reality, and his class nature determined that he yearned for the Kuomintang reactionaries. Wasn't this the kind of ill-concealed intention that everyone could see?

The most explosive item in the exhibition was the material of an active counter-revolutionary case: a draft party platform for an underground counter-revolutionary organization called the "Democratic Justice Party." The two principal culprits — the party's chairman and vice-chairman — had just been sentenced to death at a public trial, paraded through the streets, and publicly shot. The party's platform was to overthrow the Communist Party and establish democratic politics. This was, of course, a heinous and unforgivable heresy — nothing less than execution would satisfy the people's righteous anger.

On the day of the annual public sentencing, under a blazing sun, our small county town in the mountains of southern Anhui buzzed with a festival-like excitement. The public trial was held at the town's largest athletic field, grandly known as "Zhongshan Park." Several thousand people packed the field so tightly not a drop of water could seep through. The criminals, heads shaved and heavy placards hanging from their necks, were escorted onto the stage. After sentencing, the placards of those condemned to death were marked with a red cross before the parade through the streets. What interested everyone most was the death penalty — the kind of event that could thrill a crowd. Seven or eight criminals were sentenced to death on the spot, including the two young counter-revolutionaries, several murderers, and a production team leader convicted of "seriously undermining the Up to the Mountains and Down to the Countryside campaign" — a crime that referred to using one's position to rape or seduce young women sent down to the countryside. Each time a death sentence was announced, two burly men behind the condemned man on stage would force his head down and stuff something into his mouth to prevent him from struggling at the last moment or shouting counter-revolutionary slogans. The death-row prisoners' varied reactions were a major spectacle. Some collapsed into a heap on the ground and had to be kicked and dragged before they could just barely kneel onstage for the public shaming. Others struggled with all their might — their heads forced down, then raised again. It was said that these were the type most likely to shout counter-revolutionary slogans if not gagged.

After the public sentencing concluded, the parade through the streets began. Four or five criminals were pressed against the front railing of each truck as it slowly rolled down the county's main street. Nearly everyone who could leave their home came out. Those who hadn't made it to the field to watch the trial live had long since staked out good spots along the main street near their homes, waiting for the parade convoy. The young people, bursting with energy and excitement, simply followed alongside the trucks. The clever ones brought bicycles so they could catch up with the best part — the execution scene. Although these were public executions and onlookers were tolerated, the execution site was kept secret, presumably to prevent overcrowding that might interfere with official business. Executions were generally carried out within an hour after the parade. Based on past experience, there were two or three likely execution sites about ten li outside town, and people were stationed at each one, waiting like the proverbial farmer who saw a rabbit dash into a tree stump and decided to wait there for the next one. I was not so clever. Carried along by the crowd, I rushed east then west, and by the time I finally made it to the scene, there was nothing but heads upon heads — the proceedings were already over. People formed circles, listening to those who had witnessed the execution with their own eyes describe every detail. After the execution, a medical examiner in a white coat would verify the death on site and sign the death report. Later, a rumor spread that the dictatorship authorities demanded that the families of executed counter-revolutionaries pay for the cost of the bullet. At the time, we thought this was perfectly reasonable. A bullet might not be worth much, but this was a just punishment for the counter-revolutionary's family.

Many years later, I still wonder whether a thirst for blood is rooted in human nature. How else to explain the excitement and frenzy of the spectators at the execution ground? There was a saying back then: the revolutionary masses' day of carnival is the class enemy's day of suffering.

Speaking of bloodthirst, I'm reminded of La Espero ("The Hope"), by L.L. Zamenhof, the creator of Esperanto. This poem became the anthem of Esperantists worldwide, their "Internationale." As part of my master's machine translation project, I once had the system translate this song automatically into English and Chinese:

(099) LA ESPERO : ESPERANTISTA HIMNO ( POEMO FAR ZAMENHOF ) .

(100) EN LA MONDON VENIS NOVA SENTO ,

TRA LA MONDO IRAS FORTA VOKO ;

(101) PER FLUGILOJ DE FACILA VENTO ,

NUN DE LOKO FLUGU GHI AL LOKO .

(102) NE AL GLAVO SANGONSOIFANTA ,

GHI LA HOMAN TIRAS FAMILION ;

(103) AL LA MOND' ETERNE MILITANTA ,

GHI PROMESAS SANKTAN HARMONION .

(099) THE HOPE : ESPERANTIST'S HYMN ( POEM BY ZAMENHOF ) .

(100) INTO THE WORLD CAME NEW FEELING ,

OVER THE WORLD GOES STRONG VOICE ;

(101) BY WINGS OF EASY WIND ,

NOW FROM PLACE LET IT FLY TO PLACE .

(102) NOT TO SWORD BLOODTHIRSTY ,

IT PULLS THE MAN FAMILY ;

(103) TO THE WORLD EVER FIGHTING ,

IT PROMISES SACRED HARMONY .

(099) 希望: 世界语者的颂歌 (柴门霍夫所作的诗歌)。

(100) 新感觉来到了世界,

有力的声音走遍世界;

(101) 用顺风的翅膀,

现在让它从一个地方飞到另一个地方吧。

(102) 它不把人的家庭

引到渴血的刀剑;

(103) 向永远战争着的世界,

它允诺神圣的和谐。

— Written on May 18, 2006

Homespun and Factory Cloth

When I was a child, in the 1960s and 1970s, I still wore clothes made of tubu — "homespun" cloth. This was fabric hand-woven by farmers, bought and then sent to the dyeing workshop to be dyed blue or black. It was coarse, not sturdy, and tore easily, so you had to patch it to wear it for long. There were no patterns, of course. The dyeing workshop was like a giant bathhouse, thick with steam. The dyed cloth bled color badly, often staining other clothes black.

Later, in the late 1960s, state-supplied machine-woven khaki fabric — which required ration coupons — began to appear. It was much nicer-looking and sturdier. Since it required coupons, homespun didn't immediately exit the market. Still later, synthetic fabrics like diqueliang (Dacron) and nylon began arriving. I remember the first time my parents bought Dacron cloth to make shirts for us brothers — around 1970 — I flatly refused to wear it, thinking it was too revisionist, so shiny, like what a bourgeois young master would wear. From childhood we were taught to learn from Lei Feng's example of hard work and plain living: "three years new, three years old, another three years of mending." Wearing nylon socks for the first time also felt too extravagant, yet they felt wonderfully comfortable once on. (Only later did I discover they weren't breathable and caused foot odor.)

Synthetic fabrics became popular in the 1970s. Their greatest advantage was durability: Grandma no longer had to spend the whole year mending the family's clothes, shoes, and socks. Around that time, China began importing a Japanese chemical fertilizer, urea, and the farmer brethren discovered that the fertilizer sacks were excellent synthetic fabric. They eagerly turned urea sacks into bed sheets and quilts, and these worked surprisingly well. The only drawback was that the giant Chinese characters for "urea" printed on the sacks accompanied people into their dreams every night. Later, reading about the history of DuPont's invention of nylon in the 1930s, I learned that American GIs in places like the Philippines gave nylon goods as gifts to woo local girls, and the gifts were enormously popular.

By the late 1970s, you could still occasionally see homespun cloth, but as the price of factory cloth fell and ration coupons were abolished after the Cultural Revolution, homespun simply couldn't compete.

How times change. Today in the West, handmade products of pure cotton, pure linen, or pure silk have become fashionable — things only bourgeois young ladies and gentlemen can afford, while the impoverished proletariat must make do with cheap, shiny, durable synthetics. My online friend Xiaoshan tells me that homespun-style products are now quite expensive. There's a children's clothing brand called Hanna Andersson that touts its "organic cotton" and charges a premium for it. At Whole Foods, a shirt of the most ordinary linen design sells for nearly two hundred dollars. Xiaoshan says: "I think clothes made from handwoven cloth — rough cotton shirts, casual pants, women's skirts — would be absolutely cool. No one else would be wearing the same thing as you." What was once an unavoidable hardship has now become a trend.

— Written in October 2011

The Art of Arguing

One of the pleasures of being online is watching the "old-timers" argue. Old-timers don't like to argue, but once they get going, their sharpness never loses its humor, and you often can't help but laugh. Some of the young folks' arguments, on the other hand, leave much to be desired — foul language, zero technical content, let alone humor — worse than a fishwife's street brawl. The times have changed, heaven and earth have turned upside down, yet the quality of arguing has not improved. Maybe I'm just a cranky old man, but I always feel today's young people can hardly reach the "self-oblivious" realm of arguing that we attained back in our day. Old ginger is still the spiciest. Yesterday, online, I saw our elder brother talking nonsense — probably a bit drunk. I couldn't resist jumping in with a jab, fully expecting him to come after me. Unexpectedly, the old fellow was quite receptive, humbly accepting my opinion, and ended with: "Looks like I'll have to argue with myself now." Brilliant — now that's a realm! When arguing reaches such a state, it truly does justice to the brothers and sisters gathered around to watch. In my youth I was even more extreme — I argued so hard I actually changed my sex. Such self-oblivious passion could truly move heaven and earth and make ghosts weep.

I've loved to pick arguments since I was little — from elementary school through college, it never stopped. In elementary school I was a shrimp, not really able to get a word in edgewise. Still, having been through the revolutionary baptism of the Great Cultural Revolution, I especially loved going to the streets to listen to the young Red Guard debaters, and I admired the masters of debate with all my heart.

I remember in high school, during one of our "Learn from the Peasants" sessions, we were all staying at a farm when a great debate erupted in the dormitory one night: "Are humans animals?" Having thoroughly studied Marxism-Leninism, I knew that humans are the sum total of social relations, that humans use tools, and that this is the fundamental characteristic distinguishing humans from animals — and so on. I thought anyone who insisted that humans are animals must have water in their brain — practically mentally deficient. Armed with truth, righteous and stern, I never imagined my opponent would also be a fiercely competitive type who simply would not admit error. I was absolutely furious. Wave after wave, the debate went on the entire night. By daybreak, I already felt my breath failing, no longer knowing what I was shouting, still less able to hear what the other side was saying. Like holding a position, I felt the moment I let up, the enemy would seize the opening and pour in.

The next day, the debate finally stopped — not for any other reason, but because I had completely lost my voice. My throat was congested and inflamed, the pain unbearable. Classmates suggested I gargle with salt water, but it didn't help. For an entire week, I became a mute. Later, when I finally regained my voice — no one could have guessed — I had gone from a male voice to a female voice. Not the kind of pleasant, melodious female voice, mind you, but one closer to the old witch in Disney's Snow White.

I've loved music all my life. When I hear something that moves me, I can't help but sing along — I have to let it out to feel fully satisfied. I quickly discovered that my satisfaction was built on other people's suffering. Fortunately, I'm fairly self-aware. I voluntarily keep my distance from karaoke and only occasionally let loose at home. I'm deeply grateful to my wife and daughter, who are quite tolerant. "As long as we see you're happy," they say.

Now, whenever I'm on the phone and the other person says, "Yes, Madam," I'm reminded of my youthful, headstrong days.

— Written on December 11, 2008

Work-Study

Work-study during summer vacation was already popular when I was in middle school. My first job was helping sell pears at the collective supply-and-marketing co-op, at one yuan a day. The old clerk criticized me for being too honest, saying you had to size up the customer and shortchange them appropriately. Roughly speaking, when a customer asked for one jin (ten liang), handing over eight liang was about right, and you had to make the scale beam tip up high so the customer felt satisfied. This kind of petty swindling was the norm at a small collective enterprise like the supply-and-marketing co-op. I found that most customers were easy to fool; only a few were sticklers. If you got caught, you pretended it was an honest mistake, smiled, and made up the difference. That was my first lesson in life. Looking back, the old hands who taught us to shortchange customers were fundamentally good people, yet they carried out these deceptions as if they were perfectly natural and justified.

Later I worked twice as a "helper" at a rural grain depot, always doing the least skilled work, called daicang — leading peasants who had just had their grain weighed to the designated spot in the designated warehouse. I also helped the warehouse keeper shuffle the grain around. Grain in storage had to be regularly turned — the bottom brought up, the top sent down — to prevent mildew. This was fairly exhausting work. The air inside the warehouse was foul, thick with dust and haze.

After going abroad, I followed the herd during my studies in the UK and took a restaurant job, once again doing the least skilled work: washing dishes. Weekend shifts ran from 4 p.m. to one or two in the morning, at fifteen pounds a shift. By the time I got home, I was falling apart. Anyone who has washed dishes in a restaurant will never again trust restaurant hygiene, especially on weekends. Sometimes the water in the dishwashing sink wasn't changed the entire evening. When it got too dirty, you just poured in massive amounts of detergent until it was full of foam, then wiped the dishes glossy with a dry cloth. It wasn't that we were lazy; we simply had no choice. Dirty dishes piled up like a mountain and kept coming, and there was no time to change the water. Some restaurants had dish-drying machines, which added a sanitizing step and made things relatively more hygienic.

— Written on October 1, 2011

The Art of Drinking Beer

Twice in my life I have had beer that was unforgettable — truly fine brew. The first time was in 1989, when I went to Munich, Germany for a conference (see "Morning Glory at Noon: A Journey to Europe"). The conference organizers took us to the outskirts of the city for an outdoor beer festival. Before this, I had scarcely touched alcohol, but Munich's draft beer — such wonderful taste, and not intoxicating either — captivated me instantly. I also loved the atmosphere: beer mugs the size of buckets, and the meat that accompanied the drinking — roasted whole or half pigs and sheep — you couldn't ask for anything more magnificent. The utensils used to cut the meat were like the great swords our ancestors once swung at the Japanese devils' heads. You held out your plate and one swing of the blade sent two jin of meat onto it. Half-tipsy, half-dreaming, I was always reminded of the heroes of Mount Liang, weighing out silver by the scale and drinking wine by the bowl. On that midsummer night, fair-skinned girls in brightly colored, elaborately layered traditional dress wove through the crowd, all smiles. What night was this? I no longer knew where I was.

The second time was a few years ago in Hokkaido, also for a conference. An old Japanese friend took me to an antique-style beer house, where we savored Sapporo beer alongside simple local snacks like boiled edamame. Sapporo is famous for two things: beer and hairy crab. Sapporo's dark draft was dry, mellow, and refreshing. Two large mugs down, I returned to the hotel bleary-eyed and drunk. Undressing and collapsing onto the bed, I felt my body floating upward, as if I had just come out of a sauna: steaming all over, vapors rising, as though every impurity in my body were being purged. It was an indescribable sensation, as if I were about to take flight: "drifting as though having left the world behind, sprouting wings and ascending to the immortals."

A friend once asked: how exactly do you beer drinkers get a buzz out of it? My answer is: it's far more than a buzz — drinking beer is conducive to world peace. Every time I reach a mild tipsiness, I feel that all people are my family. Mild tipsiness, that floating sensation, is the optimal state. Li Bai, the great immortal poet of the Tang Dynasty, probably composed his timeless masterpieces like "Drinking Alone Under the Moon" in precisely this state:

A jug of wine among the flowers,

I drink alone, no friend close by.

I raise my cup to invite the moon,

Who with my shadow makes us three.

The moon, alas, knows not to drink;

My shadow follows me in vain.

With moon and shadow, friends for now,

Let's seize the joy before spring ends.

— Written in October 2011

朝华午拾 · 第八章:朝华点滴(下)

"千万不要忘记阶级斗争"

记得大概是1970年左右,小学四五年级的时候,老师带领我们参观一个"阶级斗争展览馆",对学生进行活生生的教育。展览馆里面有讲解、图示和实物,让我们感觉到阶级斗争就在身边,需要年年讲,月月讲,天天讲。

首先看到的是地主份子的变天账。这是从一家地主家地窖里面搜查出来的老地契。保留地契,当然是想变天,将来好对贫下中农反攻倒算。讲解词说,这个老地主,平时见人点头哈腰,其实是老奸巨猾,罪该万死。

还有另外一份"漏网右派"的日记。解说词说,这个道貌岸然的教师,心理阴暗,查抄出来的几大本日记,充满了卿卿我我的资产阶级腐朽没落的情调(日记记录了当事人的恋爱感受),更可恶的是还有向往国民党反动派的诗词。展出的部分就是这样一首题目叫做"海恋"的诗歌。我看到的是字迹娟秀的一首抒情散文诗,隐约记得的部分有,大海啊,我的故乡,我的归宿,我的向往,我的盼望!通篇就是大海这个主题。解说词说,漏网右派为什么如此肉麻地讴歌大海呢?很显然,他是向往大海那边的台湾国民党蒋匪,盼望他们反攻大陆。当年我们毫不怀疑《海恋》作者的大海象征着不能明说的国民党。他是臭老九,又有资产阶级情调,肯定对现实不满,阶级本性决定他向往国民党反动派。这难道不是「 司马昭之心」路人皆知么?

展品中最具有爆炸力的是一份现行反革命的材料,地下反革命组织"民主正义党"的党纲草案。两名主犯就是前不久公审宣判死刑被游街示众、当众枪毙的该党的主席和副主席。党纲宗旨是推翻共产党,建立民主政治。这当然是十恶不赦的异端,罪大恶极,不杀不足以平民愤。

一年一度的公审那天烈日炎炎,我们这个皖南山区的小县城,象过节一样热闹。公审在本城最大的操场(号称"中山公园")举行。几千人把操场挤得水泄不通。罪犯们剃光头,挂着大牌子被押上来,死刑犯的牌子上在宣判后游街时被划上红叉。大家最感兴趣的还是死刑这种可以给公众带来兴奋的事件。有七八个罪犯被当场宣判死刑,其中包括那两个年轻的现行反革命,还有其他杀人犯和一个严重破坏上山下乡的生产队长(破坏上山下乡罪是指利用职权强奸或诱奸下乡女青年)。每当宣判一个死刑,台上那个死刑犯就被身后两个彪形大汉摁住头颅,并往口中塞进物件,防止他们临死挣扎,呼喊反动口号。死刑犯表现各异,是一大看点。有的软瘫在地上,需要连踢带拉,才能勉强跪在台上示众。也有的竭力挣扎,头摁下去,又抬起来。说是这种人如果不封口,最可能呼喊反动口号。

公审大会结束后,是游街示众,每辆卡车前端押四五个罪犯,缓缓从县城大街上通过。全城能出来的人几乎都出来了,没有机会来操场看公审实况的,早早在家附近大街边上找好位置等待游街的车队。对于精力充沛、兴奋莫名的年轻人,干脆随着车队前行。有聪明的带上自行车,好赶上最精彩的执行枪毙的现场。虽然是公开处决,允许围观,但枪毙现场保密。大概是怕人满为患,影响公务。一般在游街以后一小时内执行枪决。根据以往经验,城外十里地左右,有两三个最可能的行刑现场,各处都有人守株待兔。我比较笨,随着人流东赶西赶,最后好不容易来到现场,除了人头还是人头,而且过程已经结束。人们围成一圈一圈,听亲眼目睹枪决现场的人描述每一个细节。行刑之后,有穿白大褂的法医现场验尸,签署死亡报告。后来有传言,说专政机构要求向被枪毙的反革命分子家属收取子弹费。我们当时觉得理所当然,子弹虽然不值钱,但这是对反革命家属的正当惩罚。

很多年过去,我一直怀疑,嗜血是否源于人的本性,否则如何解释行刑场上看客的兴奋和疯狂呢。当年就有这么个说法,革命群众的狂欢之日,就是阶级敌人的受难之时。

提到嗜血,想起世界语创始人Zamenhof的《希望之歌》。这首诗歌成为全世界世界语者的《国际歌》,我曾经在我的硕士机器翻译项目中把这首歌自动翻译为英语和中文:

(099) LA ESPERO : ESPERANTISTA HIMNO ( POEMO FAR ZAMENHOF ) .

(100) EN LA MONDON VENIS NOVA SENTO ,

TRA LA MONDO IRAS FORTA VOKO ;

(101) PER FLUGILOJ DE FACILA VENTO ,

NUN DE LOKO FLUGU GHI AL LOKO .

(102) NE AL GLAVO SANGONSOIFANTA ,

GHI LA HOMAN TIRAS FAMILION ;

(103) AL LA MOND' ETERNE MILITANTA ,

GHI PROMESAS SANKTAN HARMONION .

(099) THE HOPE : ESPERANTIST'S HYMN ( POEM BY ZAMENHOF ) .

(100) INTO THE WORLD CAME NEW FEELING ,

OVER THE WORLD GOES STRONG VOICE ;

(101) BY WINGS OF EASY WIND ,

NOW FROM PLACE LET IT FLY TO PLACE .

(102) NOT TO SWORD BLOODTHIRSTY ,

IT PULLS THE MAN FAMILY ;

(103) TO THE WORLD EVER FIGHTING ,

IT PROMISES SACRED HARMONY .

(099) 希望: 世界语者的颂歌 (柴门霍夫所作的诗歌)。

(100) 新感觉来到了世界,

有力的声音走遍世界;

(101) 用顺风的翅膀,

现在让它从一个地方飞到另一个地方吧。

(102) 它不把人的家庭

引到渴血的刀剑;

(103) 向永远战争着的世界,

它允诺神圣的和谐。

记于2006年5月18日

土布洋布

我小时候,1960-1970年代,还穿"土布"衣服,"土布"是农民手工纺织的,买回家,送进染坊去染成蓝色或者黑色。很粗糙,不结实,容易破,所以要补补丁才能穿久。当然没有花样。染坊象个大澡堂,热气熏天。染出来的布掉色得厉害,往往把其他衣服也带黑了。

后来,60年代后期,开始有需要布票的国家供应的机织咔叽布,漂亮结实多了。由于需要布票,所以土布没有立刻退出市场。再后来,化纤制品"的确良"和尼龙开始来了。记得第一次父母给我们兄弟买的确良做衬衫,大约是1970年左右,我坚决拒绝穿,觉得这太修正主义了,那么光亮,象资产阶级少爷。我们从小觉得要学习雷锋艰苦朴素,新三年,旧三年,缝缝补补又三年。第一次穿尼龙袜子也觉得太奢侈,可是感觉穿上以后,特别舒服。(后来才发现不透气,有臭脚的毛病。)

化纤制品的流行是1970年代,最大优点是结实,奶奶再也不用一年到头给全家缝补衣服鞋袜了。当时开始进口日本化肥"尿素",农民兄弟发现化肥袋子是很好的化纤制品,就纷纷拿尿素袋子做床单和被子用,还真好使。就是袋子上的硕大的汉字"尿素"每天伴随着人进入梦乡。后来,读30年代 DuPont 发明尼龙的历史,说美国大兵当年到菲律宾等处,就以尼龙制品作为礼物在当地泡妞,极受欢迎。

70年代末,偶然还看见有土布,但是因为洋布价格下降,文革后布票又取消了,土布就无法竞争了。

斗转星移,时事变迁,如今在西方,纯棉、纯麻或者纯丝的手工制品开始时髦,只有资产阶级小姐少爷才穿得起,而贫苦的无产阶级只能将就使用便宜、光鲜又结实的化纤制品了。网友小闪告诉我,现在"土布"制品可贵着呢,有一种品牌的童装HANNA ANDERSSON号称用"土布"(organic cotton)把价格提上去。WHOLEFOODS里卖的衣服,一件最普通样式的麻布上衣就卖近两百刀。小闪说:俺觉得自己织的布做粗布衬衫,休闲裤,女裙绝对cool,没人跟你穿一样的。过去无奈的事情现在变成时髦了。

记于2011年10月

掐架的境界

上网的好处之一是看"老人"掐架。老人不爱掐架,一旦掐起来,锋芒里不失幽默,常令人忍俊不住。不过,有些小年轻的掐架却不敢恭维,污言秽语,没有一点技术含量,更谈不上幽默,比泼妇骂街还不如。时代变迁,天翻地覆,可是掐架的水平却不见长。也许我是九斤老夫,总觉得现在的年轻人很难达到我们当年掐架的"忘我"境界。生姜还是老的辣,昨天在网上看到老大哥胡言乱语,许是喝多了,忍不住上去抢白他一句,自以为他要跟我急了,没料他老兄还很服气,虚心接受我的意见,最后来一句:"看来我得自己和自己掐了",绝啊,那是什么境界!掐架要是掐到这种境界,才不愧待围观的众兄弟姐妹们。我年轻时更绝,掐架甚至能掐到改变了性别,其忘我热忱,可谓惊天地,泣鬼神。

我从小就特别爱抬杠,从中小学到大学,一直如此。小学阶段我是班上小不点儿,不大插得上嘴。可还是经过了大革命的战斗洗礼,特别爱到大街上听小将们大辩论,对辩论高手佩服得五体投地。

记得是上高中的时候,有一次学农,大家住在农场,晚上在寝室爆发了一场"人是不是动物"的大争论。我因为熟读马列,知道人是社会关系的总和,人会使用工具,这是人区别于动物的根本性特征,等等。觉得坚持人是动物的同学,脑子被灌水了,简直是弱智。我真理在握,义正词严,没想到对手也是一个争强好胜的主儿,就是死不认错。简直气坏了,于是一浪高过一浪,辩论了整整一夜。到快天亮的时候,我已经感觉气接不上来,也不知道自己在嚷些什么,更听不进对方在说什么。象坚守阵地一样,感觉一旦松懈,敌人就会乘虚而入。

第二天终于停止争论了,不是为了别的,而是我完全失声了,嗓子充血,疼痛难忍。同学建议我用盐水漱口,也不管用。整整一个礼拜,我成了哑巴。后来好不容易恢复发声了,谁也想不到,我竟然从男声变成了女声-不是那种悦耳动听的女声,而是比较接近迪斯尼动画片"白雪公主"里面那个老妖婆的声音。

我一辈子爱好音乐,听到高兴处,忍不住要随曲而歌, 抒发一下才痛快。很快发现,我的痛快是建立在别人的痛苦之上。还好,我比较自觉,自觉与卡拉OK保持距离,只是偶而在家里抒发,很感激太太和女儿,她们比较谅解,说看到你高兴就好。

如今,每当我打电话听到对方跟我说:"Yes, Madam",我就想起我当年的年轻好胜来。

记于2008年12月11日

勤工俭学

中学生暑假勤工俭学,当年就很时兴。开始是去集体供销社帮助卖梨子,每日一元工钱。老店员批评我太老实,说要看顾客,适当克扣才好。大体是一斤,给八两就不错了,还要看上去,秤杆高高的,让顾客高兴。这种小的坑蒙拐骗,在小本生意作为集体企业的供销社,是常态。发现大部分顾客很容易上当,只有少数较真的。露馅了,就假装不小心弄错了,陪笑脸补足摆平。这是在生活中学的第一课。回想起来,传授责令我们克扣斤两的老师傅也都是善良的人,但是做起这些事情却理所当然天经地义的样子。

后来做了两次农村粮站的"协助员",一直做其中最没有技术的活,叫"代仓",就是领着农民把过完磅的稻子带到指定仓库的指定位置。平时也帮忙仓库保管员倒腾仓库。粮食在仓库要定期来回倒腾,底下的翻上来,上面的翻下去,防止霉变。这个活比较累人。仓库里面空气污浊,尘土飞扬,灰雾蒙蒙的感觉。

出国以后的勤工俭学是在英国留学时候随大流,去餐馆打工,仍然是最没有技术含量的洗碗工作。周末从下午4点干到夜里一两点,工钱是15英镑,回到家散架了一般。凡是干过洗碗工的人,再也不会相信餐馆的卫生,特别是周末。有时候洗碗池子的水一个晚上不换,实在太脏了,就使劲往里面倒洗涤剂,满是泡沫,用干布一抹就光洁起来。不是我们偷懒,实在没有办法,脏碗象山一样涌来,根本没有换水的时间。有的餐馆有烘碗机,多了一道消毒工序,才相对比较卫生一些。

记于 2011年10月1日

喝啤酒的境界

我一辈子有两次喝啤酒,难以忘怀,好酒啊。第一次是1989年,去德国慕尼黑开会(见《朝华午拾:欧洲之行》)。大会把我们拉到一个郊区,参加一个室外的啤酒节狂欢。此前,我几乎不沾酒,可是慕尼黑的生啤酒,口感真好,也不醉人,一下子就迷上了。也很喜欢那个场景,啤酒杯子海大,那助酒的肉食,是烤熟的或整条或半条的猪啊羊啊,别提有多大气。切割肉食的用具,跟当年向鬼子头上砍去的大刀似的,你端过盘子去,一刀就是两斤肉下来。微醉微醺之间,总使我联想起梁山好汉大秤分金银,大碗吃酒肉的痛快。仲夏之夜,有身着艳丽繁缛的传统民族服装的白人姑娘在身边穿来穿去,笑容可掬。今夕何夕,不知身在何处。

第二次喝啤酒,是前几年到北海道,也是开会。跟日本老朋友到一个古色古香的啤酒屋,就着煮毛豆之类的特色小菜,品尝扎幌啤酒。扎幌两大宝:啤酒和大毛蟹。扎幌的黑生啤,干醇爽口。两大杯啤酒下肚,醉眼迷蒙地回到旅馆。脱衣上床,感觉人直往上飘,象刚从桑那浴出来一样,全身蒸腾,呼呼地向上冒气,仿佛要把身体里面所有杂质清理尽净。不可言传的感受,好像要飞起来,"飘飘乎如遗世独立,羽化而登仙"。

有朋友问:你们喝啤酒的, 倒底是怎么喝出快感的?我的回答是,岂止是快感,喝啤酒有利于世界和平。每次喝到微醉时,感觉所有人都是亲人。以微醉为度,飘飘欲仙是最佳状态,唐代大诗仙李太白大概就是在这个状态下写出他的《月下独酌》等千古名作的:

花间一壶酒,独酌无相亲。

举杯邀明月,对影成三人。

月既不解饮,影徒随我身。

暂伴月将影,行乐须及春。

记于2011年10月

From 朝华午拾. Original Chinese: 朝华之八: 朝华点滴.

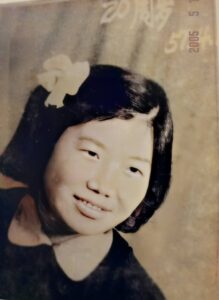

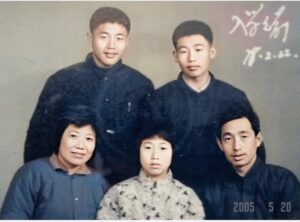

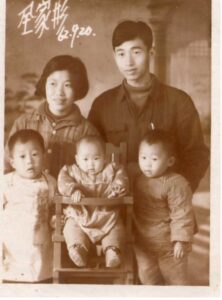

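Family photo in front of our home, 1969: the whole family, including my maternal grandmother and aunt, together with our close neighbors and friends Mama He and Sister Xiaohui.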
